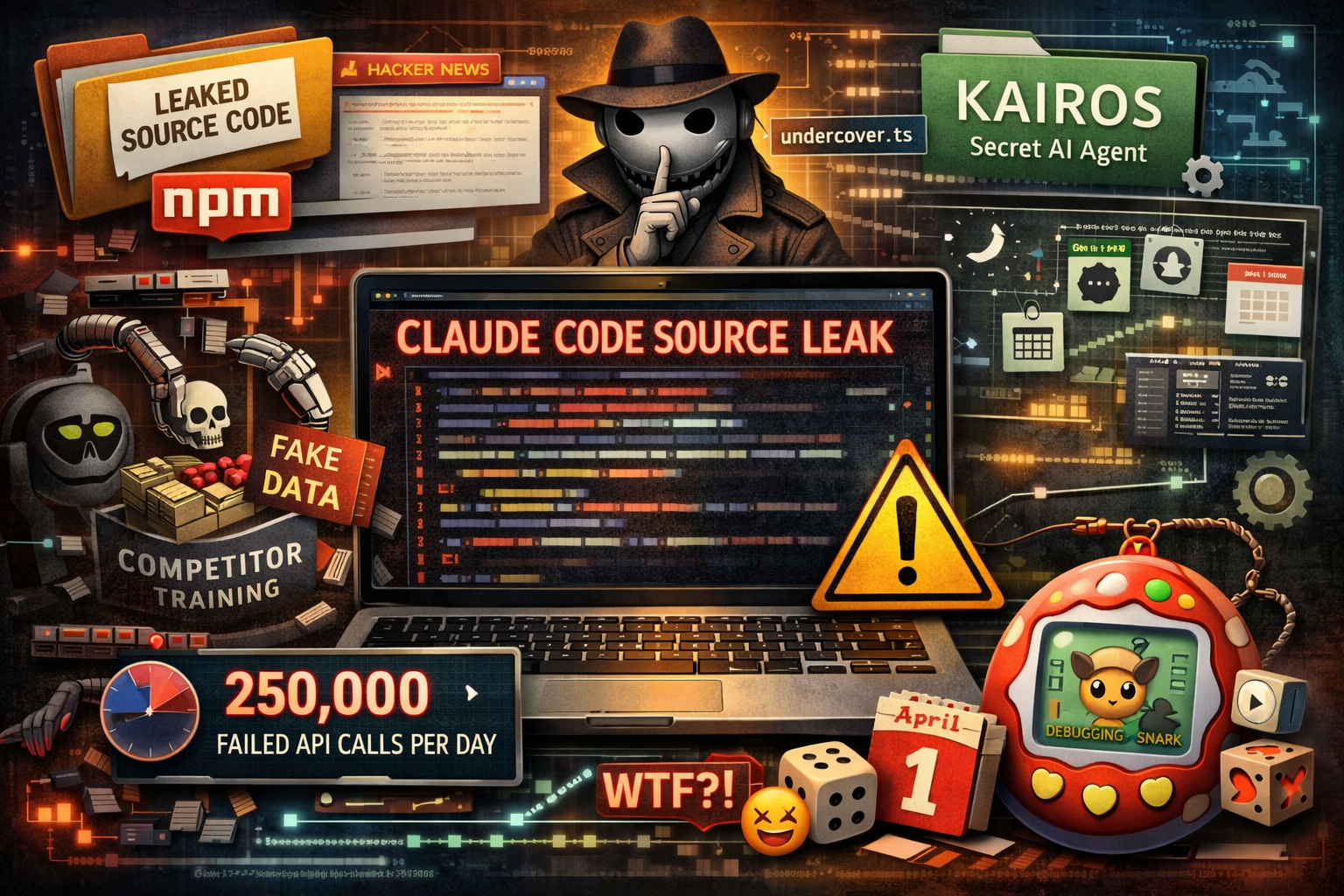

Anthropic accidentally shipped the full source code for Claude Code inside an npm package. It was quickly removed, but not before developers mirrored it and spent a morning digging through it on Hacker News. Here’s what they found, in plain English.

Based on the original analysis by Alex Kim.

Poisoning Competitors’ Training Data

Claude Code has a hidden feature that injects fake, decoy tools into its responses when it detects that someone might be recording its output to train a rival AI model. The idea is to corrupt that training data before it can be used. There’s also a second mechanism that replaces the AI’s full reasoning with a short summary, so anyone spying on the traffic never sees the complete picture.

These protections can be worked around without too much effort, so the real deterrent is probably the legal threat rather than the technical barrier.

An AI That Hides It’s an AI

A file called undercover.ts puts Claude Code into a kind of stealth mode when it’s used in public or open-source projects. In this mode, the AI is instructed never to mention Anthropic’s internal codenames, team channels, or even the name “Claude Code” itself.

Most controversially, this mode cannot be turned off — only on. That means commits and pull requests made by Anthropic employees using Claude Code in open-source projects would carry no indication that an AI was involved.

Detecting User Frustration… With a Simple Text Pattern

Claude Code checks whether a user is getting frustrated by scanning their messages for swear words and angry phrases — not with AI, but with a basic text-matching pattern. Words like “wtf,” “this sucks,” “fucking broken,” and similar expressions trigger a frustration flag.

The irony of an AI company using such a low-tech approach for sentiment analysis wasn’t lost on the Hacker News crowd. That said, it’s fast, cheap, and probably gets the job done.
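To make the idea concrete, here is a minimal sketch of what pattern-based frustration detection can look like. It is not the leaked code: only the three quoted phrases come from the article, while the names `FRUSTRATION_PATTERN` and `looksFrustrated` are made up for illustration.

```typescript
// Illustrative only: the phrase list is limited to the examples quoted in
// the article, and the identifiers below are hypothetical.
const FRUSTRATION_PATTERN = /\b(wtf|this sucks|fucking broken)\b/i;

// Returns true when the message contains one of the listed phrases.
// The regex has no global flag, so repeated calls carry no hidden state.
function looksFrustrated(message: string): boolean {
  return FRUSTRATION_PATTERN.test(message);
}

console.log(looksFrustrated("WTF is going on with this build"));
console.log(looksFrustrated("Thanks, that fixed it"));
```

A check like this costs almost nothing per message, which is presumably why a plain pattern is attractive here even though it will miss anything not on the list.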

250,000 Wasted API Calls Per Day

A comment buried in the source code revealed that a bug was causing Claude Code to make up to 250,000 unnecessary API calls every day. In some cases, a single user session racked up more than 3,000 failed attempts in a row. The fix was just three lines of code: stop trying after three consecutive failures. The comment even included the date the data was pulled and the exact number of affected sessions.
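A cap on consecutive failures is a small amount of code, which fits the description of a three-line fix. The sketch below shows the general technique, not Anthropic's actual change: `FailureGate` and `runUntilBlocked` are hypothetical names, and only the limit of three comes from the article.

```typescript
// Hypothetical helper that tracks consecutive failures and blocks further
// attempts once a limit is reached. The default of 3 is from the article.
class FailureGate {
  private consecutiveFailures = 0;
  private readonly maxFailures: number;

  constructor(maxFailures: number = 3) {
    this.maxFailures = maxFailures;
  }

  canAttempt(): boolean {
    return this.consecutiveFailures < this.maxFailures;
  }

  recordSuccess(): void {
    this.consecutiveFailures = 0; // any success resets the streak
  }

  recordFailure(): void {
    this.consecutiveFailures += 1;
  }
}

// Runs `attempt` until it succeeds or the gate closes; returns the number
// of attempts made. `attempt` returns true on success, false on failure.
function runUntilBlocked(gate: FailureGate, attempt: () => boolean): number {
  let attempts = 0;
  while (gate.canAttempt()) {
    attempts += 1;
    if (attempt()) {
      gate.recordSuccess();
      break;
    }
    gate.recordFailure();
  }
  return attempts;
}
```

With a call that always fails, the loop stops after three attempts instead of continuing into the thousands.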

A Secret Agent Mode in Development

References throughout the code point to an unreleased feature called KAIROS — what appears to be an always-on, background-running AI agent. Based on the code, it would include nightly memory processing, GitHub integration, scheduled background tasks, and continuous syncing every few minutes.

This was considered the biggest product roadmap reveal from the leak. Competitors now know what Anthropic is building next.

An April Fools’ Joke Hidden in the Code

With April 1st the day after the leak, developers found what looks like a planned joke feature: a Tamagotchi-style companion for each user. Your companion would be a randomly assigned creature from 18 possible species, with rarity tiers, RPG-style stats like “DEBUGGING” and “SNARK,” and even a 1-in-100 chance of getting a rare “shiny” version. The creature names were deliberately obscured in the code to avoid being spotted before launch.
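For a sense of how little code a roll like this needs, here is a rough sketch. It rests on assumptions: the article does not say how the assignment is actually made, so a uniform random pick is used, and rarity tiers and stats are left out because their values are not given. Only the 18 species and the 1-in-100 shiny odds come from the article; `Companion` and `rollCompanion` are hypothetical names.

```typescript
const SPECIES_COUNT = 18;   // from the article
const SHINY_ODDS = 1 / 100; // from the article

interface Companion {
  speciesIndex: number; // 0 to 17; real species names are not given in the article
  shiny: boolean;
}

// `rng` must return a number in [0, 1). Math.random is the default, and a
// stub can be passed in to make the roll repeatable.
function rollCompanion(rng: () => number = Math.random): Companion {
  const speciesIndex = Math.floor(rng() * SPECIES_COUNT);
  const shiny = rng() < SHINY_ODDS;
  return { speciesIndex, shiny };
}

console.log(rollCompanion());
```

Passing the random source as a parameter keeps the roll testable, since a fixed sequence of values always produces the same companion.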

What Does This All Mean?

Some pointed out that competitors like Google and OpenAI have already open-sourced their AI coding tools — so what’s the big deal? The difference is that those companies chose to share a public-facing toolkit. What leaked here is Anthropic’s full internal product, including unreleased features, strategic decisions, and business logic they clearly never intended to share.

The code itself can be rewritten. But the strategic surprises — what’s coming, how the product works behind the scenes, what Anthropic is worried about — can’t be un-leaked. This was Anthropic’s second accidental exposure in the same week, and it raises real questions about internal processes at one of the world’s leading AI companies.

Full original analysis: The Claude Code Source Leak by Alex Kim.
